The AI Tools Landscape in 2026: There's Now a Tool for Almost Any Need

Seven AI coding tools in 18 months. So why are you still doing everything yourself?

If you are a technical founder in 2026, you have more AI firepower available than a fully staffed engineering team had five years ago. Codex writes code from natural language. Claude Code refactors entire modules in a single pass. Cursor autocompletes faster than you can think. Copilot ships PRs from issue descriptions. Devin promised to replace your first hire entirely.

And yet.

You are still the one triaging bugs at 11pm. You are still the one who context-switches from debugging to investor deck to customer support ticket. You are still, somehow, wearing every hat.

This is not a critique of any specific tool. Every one of them is genuinely impressive. But if you are trying to decide which ones deserve your time (and your $20/month), you need a clear map of what is actually out there, what each one is good at, and what none of them do.

That is what this post is for.

The field, as of March 2026

Here are the seven AI development tools a startup founder should know about: what each one does, what it costs, and where it falls short.

OpenAI Codex

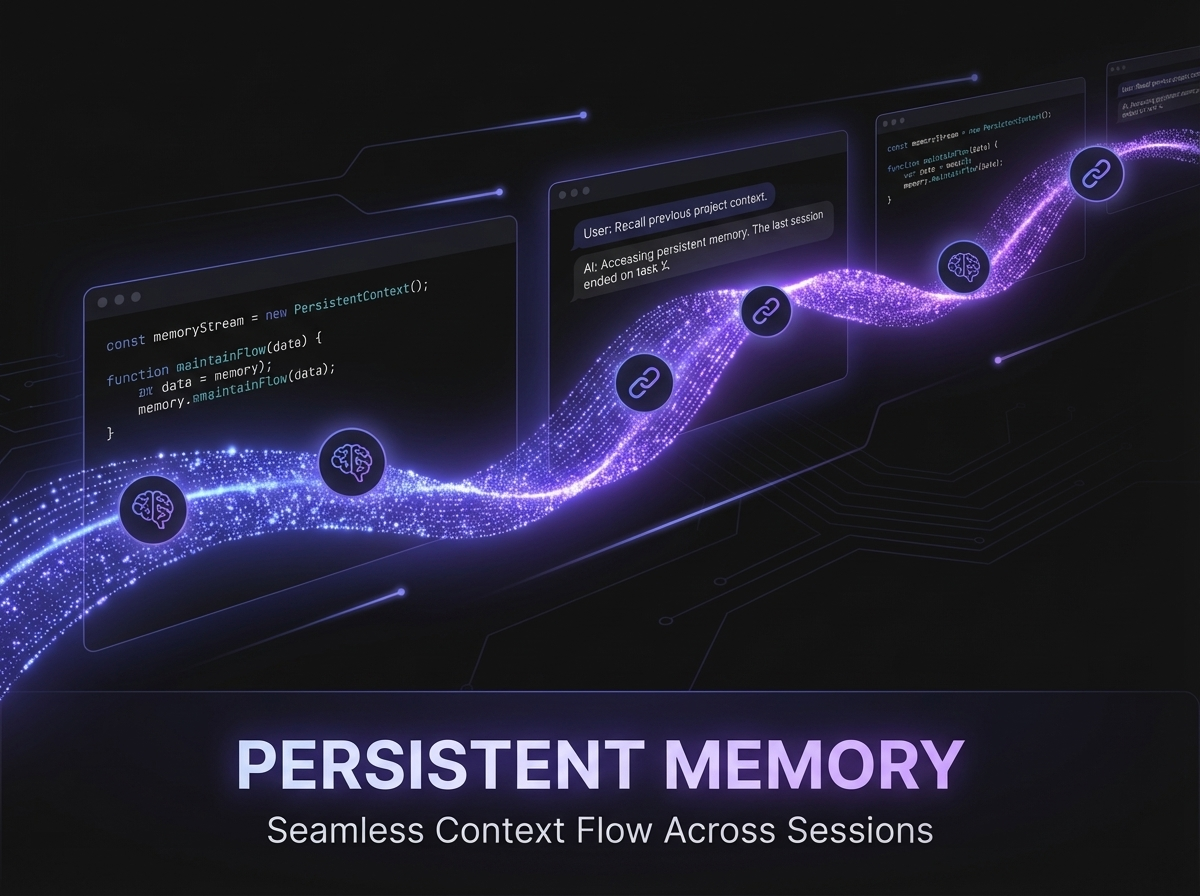

OpenAI's dedicated coding agent, available both as a CLI tool and as a cloud-based coding environment integrated into ChatGPT. Codex can spin up parallel sub-agents, each working in its own sandboxed cloud environment. It can resume threads, so you get some continuity across sessions, but there is no persistent project memory: each new session requires re-establishing context.

Best for: Quick prototyping, boilerplate generation, one-off coding tasks. Cost: $20/month via ChatGPT Plus. Limitation: All sub-agents are code-focused. It cannot plan your sprint, review your architecture, or figure out why your landing page converts at 0.8%.

Claude Code (Anthropic)

A terminal-native coding agent built for deep codebase context. Claude Code reads your project structure, respects your conventions via a CLAUDE.md file, and builds up auto-memory across sessions. It scores 80.8% on SWE-bench Verified, the highest published result among the tools covered here as of March 2026. Agent Teams can spawn multiple instances working in parallel.

Best for: Deep refactors, complex multi-file changes, developers who live in the terminal. Cost: $20 to $200/month depending on usage tier. Limitation: Incredible at engineering. Does not touch anything outside engineering.

Cursor

A fork of VS Code with AI woven into every part of the editing experience. Supermaven autocomplete feels almost telepathic — multi-line predictions with full project context. The new Background Agents feature runs up to 8 parallel agents on cloud VMs with git worktree isolation. Cursor also recently launched Automations, an event-triggered feature that hints at workflow-level thinking.

Best for: Developers who want the richest IDE experience with AI baked in. Cost: $20 to $200/month. Free tier with 2,000 completions. Limitation: Multi-model support (Claude, GPT, Gemini) is a real advantage, but the 8 background agents are 8 copies of the same role: coder. Struggles with large monorepos.

GitHub Copilot

The most accessible entry point to AI-assisted development. Copilot lives inside VS Code and JetBrains as a plugin, offers Agent Mode for multi-step tasks, and its Coding Agent can autonomously create pull requests from GitHub issues. Agentic Code Review adds automated PR feedback.

Best for: Teams already deep in the GitHub ecosystem who want minimal friction. Cost: A limited free tier (plus free access for verified students, teachers, and maintainers of popular open-source projects), then $10 to $39/month for individuals and businesses. Limitation: Tightly coupled to GitHub. Agent capabilities are narrower than dedicated tools like Claude Code or Cursor.

Windsurf (Codeium → Cognition)

An AI-native IDE that Cognition (the Devin team) acquired in July 2025, reportedly for roughly $250 million. The plan is to integrate Devin's autonomous capabilities into Windsurf's IDE experience. As of March 2026, that integration is still in progress.

Best for: Worth watching if Cognition delivers on the Devin+IDE vision. Cost: $15 to $60/month. Limitation: Post-acquisition integration is still happening. The product's identity is in flux.

Devin (Cognition)

Marketed as the "first AI software engineer." Devin 2.0 cut the entry price from $500/month to $20/month and moved to pay-as-you-go pricing in ACUs (Agent Compute Units). It runs fully autonomously in a cloud sandbox: you describe a task and it plans, codes, tests, and deploys.

The reality is more nuanced. One independent evaluation (Answer.AI, January 2025) found Devin failed 14 out of 20 real-world tasks. It works best on well-scoped, contained problems. Give it ambiguous requirements or a messy codebase and it struggles.

Best for: Clearly defined, contained coding tasks where full autonomy is desirable. Cost: $20/month base + ACU costs. Limitation: Cloud-only (your code leaves your machine). Autonomy is a double-edged sword — when it fails, debugging an AI's decisions is harder than writing the code yourself.

OpenClaw

The wildcard. An open-source autonomous AI agent that went from zero to 250,000 GitHub stars in months — the fastest-growing open-source project in history. It runs locally, connects through messaging platforms (Telegram, Discord, WhatsApp), and has 100+ built-in automation skills.

OpenClaw is not specifically a coding tool. It is a general-purpose agent that can automate workflows, manage files, control APIs, and execute system tasks. In China, it became a national phenomenon — Tencent, Alibaba, and Baidu all rushed to offer one-click deployment.

Best for: Tinkerers and automation enthusiasts who want a local AI agent they fully control. Cost: Free (MIT License) + LLM API costs ($5-50/month typical). Limitation: Serious security concerns. Researchers found 40,000+ exposed instances, a "ClawJacked" vulnerability that let websites hijack local agents, and malware in the community marketplace. The creator left the project to join OpenAI. Proceed carefully.

What all seven tools have in common

Step back and look at this list as a whole. Despite wildly different approaches — terminal agents, IDE integrations, autonomous cloud workers, open-source local agents — every single one converges on the same thing:

They all make a single person faster at coding.

Codex writes code. Claude Code writes code. Cursor writes code. Copilot writes code. Windsurf writes code. Devin writes code. Even OpenClaw, the most general-purpose of the bunch, is primarily used to automate developer workflows.

This makes sense. Coding is the most obvious bottleneck in software development, and it is the easiest task to benchmark and measure. SWE-bench scores sell products.

But if you are a startup founder — not a developer at a large company with a PM, a QA team, a marketing department, and a DevRel hire — coding is only one of a dozen hats you wear. And no amount of faster code generation fixes the other eleven.

The gap nobody is filling

Here is a thought experiment. Think about the last week of building your product. What percentage of your time was actually writing code?

For most founders I have talked to, the answer is somewhere between 20% and 40%. The rest is:

- Project management — triaging issues, prioritizing the backlog, deciding what to build next

- Architecture decisions — "should we use WebSockets or SSE?", "how do we structure the auth layer?"

- Quality assurance — manually testing the happy path, writing tests after the fact (or not), catching regressions

- Marketing — writing blog posts, managing social media, figuring out SEO, crafting launch copy

- Community and support — answering Discord questions, responding to bug reports, onboarding users

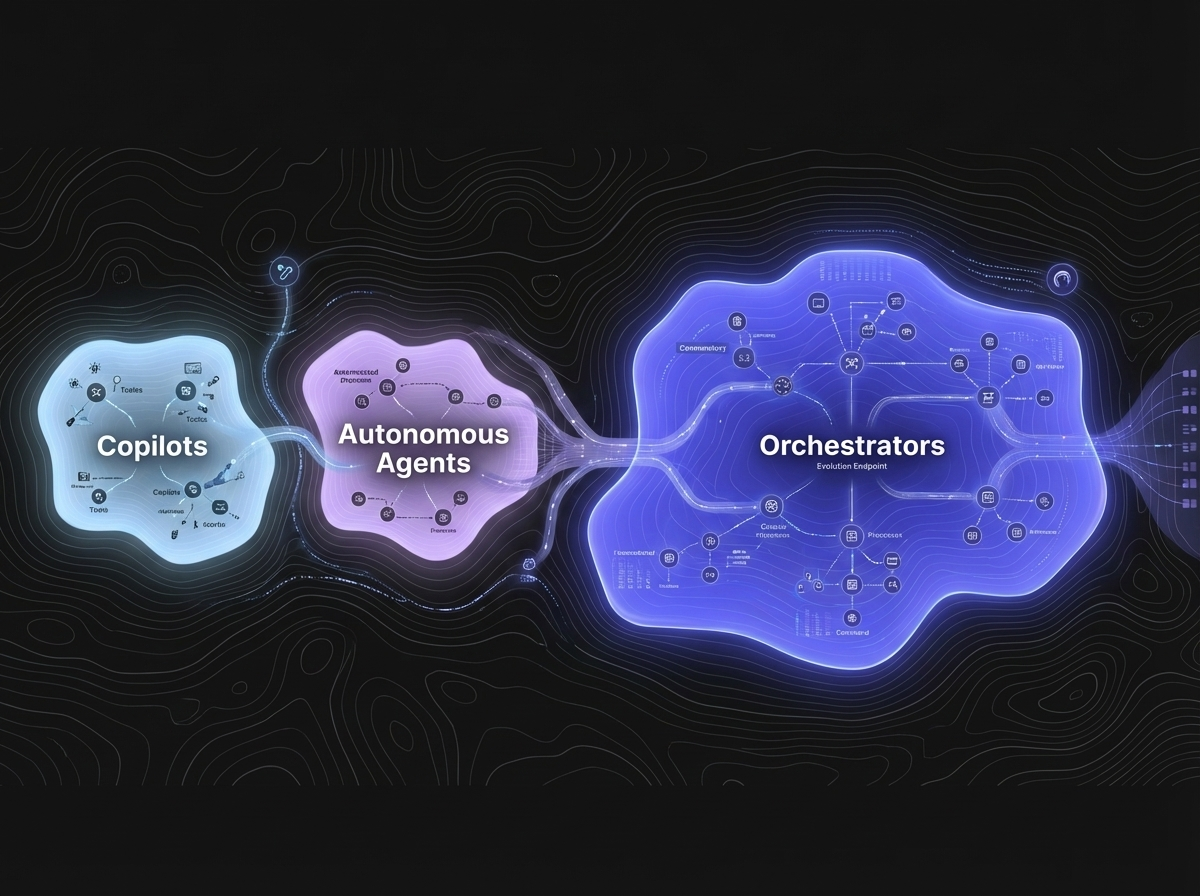

Every AI tool on the market accelerates the 20-40% of your time spent coding. Nothing touches the other 60-80%. (We broke down how multi-agent orchestration fills this gap in a separate deep-dive.)

This is the gap.

It is not a gap that gets filled by making coding agents faster, or by adding more parallel coding agents, or by giving coding agents better memory. It is a category gap. The market built seven variations of the same role and left five other roles completely empty.
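One way to see why this is a category gap rather than a speed problem is a quick Amdahl's-law calculation. The sketch below assumes coding is a fixed fraction of your working week (the 20-40% range above) and that an AI tool speeds up only that fraction; the function names and the 2x speedup figure are illustrative assumptions, not measurements.

```python
def overall_speedup(coding_fraction: float, coding_speedup: float) -> float:
    """Amdahl's law: only the coding share of the week gets faster.

    coding_fraction: assumed share of total working time spent coding (0..1).
    coding_speedup: assumed factor by which an AI tool speeds up that share.
    """
    if not 0.0 <= coding_fraction <= 1.0:
        raise ValueError("coding_fraction must be between 0 and 1")
    if coding_speedup <= 0:
        raise ValueError("coding_speedup must be positive")
    untouched = 1.0 - coding_fraction               # PM, QA, marketing, support
    accelerated = coding_fraction / coding_speedup  # the part coding tools help with
    return 1.0 / (untouched + accelerated)


def speedup_ceiling(coding_fraction: float) -> float:
    """Best case if coding took zero time: 1 / (1 - coding_fraction)."""
    if coding_fraction >= 1.0:
        return float("inf")  # unbounded only if coding is literally all you do
    return 1.0 / (1.0 - coding_fraction)


if __name__ == "__main__":
    # The 20-40% coding share quoted above, with a tool that doubles coding speed.
    for share in (0.2, 0.3, 0.4):
        print(
            f"coding {share:.0%}: 2x faster coding -> "
            f"{overall_speedup(share, 2.0):.2f}x overall, "
            f"ceiling {speedup_ceiling(share):.2f}x"
        )
```

With a 40% coding share, even infinitely fast code generation caps out at about 1.67x more output, because the other 60% of the week is unchanged.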

What would filling the gap look like?

If you have worked at a startup that had even a small team — say, five or six people — you know the difference it makes. Not because each person is faster than you at their specific task (sometimes they are not), but because work moves forward on multiple fronts simultaneously without you being the bottleneck.

The PM keeps the backlog organized and prioritized. The architect catches design problems before they become technical debt. The engineer ships code. QA catches bugs before users do. Marketing makes sure anyone knows the product exists.

No one person could do all of that at the quality level a team achieves. The value is not in individual speed — it is in coordination and coverage.

A few projects are starting to explore this. Operum is building a system with six specialized AI agents — PM, architect, engineer, tester, marketer, and community manager — that coordinate through an automated pipeline. The idea is that instead of one AI making you a faster coder, you get a team that covers the full product lifecycle.

Whether that specific approach works at scale is still being tested. But the direction is interesting because it acknowledges a truth the rest of the market has ignored: the bottleneck for solo founders and small teams is not code generation. It is everything else.

Cursor's new Automations feature is another signal. By adding event-triggered workflows, Cursor is moving beyond "write code faster" toward "automate the process around code." It is a small step, but it suggests the market is starting to see the same gap.

How to think about this as a founder

If you are deciding where to invest your tool budget in 2026, here is a practical framework:

If you need to write code faster, any of the top tools will help. Claude Code leads on benchmarks. Cursor leads on IDE experience. Copilot leads on accessibility. Pick the one that fits your workflow.

If you need to automate tasks, OpenClaw is powerful but risky. Consider whether the security trade-offs are acceptable for your startup's IP.

If you need to build custom AI workflows, LangChain and Amazon Bedrock give you the infrastructure — but expect weeks or months of engineering to get something production-ready.

If you need to stop being a one-person team, that is the problem nobody has cleanly solved yet. Multi-agent coordination that covers the full product lifecycle — not just coding — is where the next wave of real productivity gains will come from. And when those agents share persistent context across sessions, the compounding effect is even more dramatic.

The tools exist to make any individual task faster. What is still missing is something that makes the whole operation move forward without you being the single point of failure.

That is the most interesting unsolved problem in AI tooling right now.

Aleksandr Primak is the founder of Operum, a multi-agent AI team for builders and founders.

Related Posts

- Building an AI Agent Orchestrator: How 6 Specialized Agents Coordinate Through GitHub — The technical deep-dive on how multi-agent coordination actually works.

- Why "Never Loses Context" Changes Everything — Why persistent memory across sessions is the missing piece in AI tooling.

- Why Your Enterprise AI Strategy Needs an Orchestration Layer — How this architecture scales to enterprise requirements.